Adopting AI automation tools that integrate with your APIs sounds straightforward until you are staring at a legacy ERP, three different authentication schemes, and a board asking why the pilot is three months behind. For IT leaders at UK enterprises, the real challenge is not whether AI can add value. It is how to integrate it without creating new governance gaps, spiralling costs, or brittle connections that snap under production load. This guide walks you through every stage, from assessing your environment and implementing MCP governance to testing AI agent behaviour and selecting the right platform for your organisation's scale and risk appetite.

Table of Contents

- Understanding your AI automation and API integration needs

- Preparing your enterprise environment for AI integration

- Executing AI automation tool integration step by step

- Testing, verifying, and governing AI-powered API integrations

- Comparing leading AI automation integration platforms

- Rethinking AI automation platforms: lessons from enterprise deployments

- Realise efficient AI automation with GMD Automation

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI integration platforms | They manage secure connections, governance, and data translation so AI can automate workflows effectively. |
| Governance is essential | Centralised authentication and policy enforcement safeguard enterprise systems in AI automation. |
| MCP bridges preserve APIs | They allow incremental AI adoption without rewriting or weakening existing backend services. |
| Testing prevents failures | Comprehensive AI workflow testing reduces outages and regression noise for reliable automation. |
| Incremental approach works best | Start small and govern AI automation to scale securely and sustainably in enterprises. |

Understanding your AI automation and API integration needs

Before touching a single configuration, you need a clear picture of what you are actually building. AI automation tools do not operate in isolation. They query databases, trigger workflows, read documents, and write back to systems of record. That means every AI action is, at its core, an API call, and every API call carries authentication, rate limiting, and data governance implications.

AI integration platforms connect AI models and agents to data sources and services while managing authentication, data translation, and governance. That single sentence carries a lot of weight. It means the platform is not just a conduit. It is the enforcement point for your security posture.

For UK enterprises, this matters even more. GDPR obligations, sector-specific regulations such as FCA rules for financial services, and internal data classification policies all create requirements that a simple webhook-based integration will never satisfy. If your AI agent can query a customer data store without audit logging, you have a compliance problem, not just a technical one.

Common challenges that surface early in enterprise AI automation integration include:

- Siloed data that forces AI agents to work with incomplete context, producing unreliable outputs

- Governance blind spots where AI tool calls bypass existing API management policies

- Integration sprawl, where each new AI capability gets its own point-to-point connection

- Authentication fragmentation, particularly when mixing SaaS, on-premises, and cloud AI services

- Semantic gaps, where APIs expose data that AI agents cannot interpret without additional context layers

Reviewing examples of AI agent networks from production deployments can sharpen your mental model before you make architecture decisions.

Pro Tip: Map every data source your AI agent will need to touch before you design a single integration. The resulting list will reveal governance gaps you did not know existed.
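To make the mapping exercise concrete, here is a minimal sketch of a data-source inventory that flags sources lacking access control, or holding personal data without audit logging. The `DataSource` fields and the two rules are illustrative assumptions, not a compliance standard; extend them with your own data classification scheme.

```python
from dataclasses import dataclass


@dataclass
class DataSource:
    """One system an AI agent will need to touch (hypothetical fields)."""
    name: str
    contains_personal_data: bool
    audit_logged: bool
    access_controlled: bool


def governance_gaps(sources: list[DataSource]) -> dict[str, list[str]]:
    """Return, for each source with gaps, the list of missing controls."""
    gaps: dict[str, list[str]] = {}
    for src in sources:
        missing = []
        if not src.access_controlled:
            missing.append("access control")
        # Personal data without an audit trail is a compliance gap, not just a technical one.
        if src.contains_personal_data and not src.audit_logged:
            missing.append("audit logging")
        if missing:
            gaps[src.name] = missing
    return gaps


inventory = [
    DataSource("crm_customers", contains_personal_data=True, audit_logged=False, access_controlled=True),
    DataSource("product_catalogue", contains_personal_data=False, audit_logged=False, access_controlled=True),
    DataSource("legacy_erp", contains_personal_data=True, audit_logged=False, access_controlled=False),
]
print(governance_gaps(inventory))
# {'crm_customers': ['audit logging'], 'legacy_erp': ['access control', 'audit logging']}
```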

Thinking about AI business continuity strategies alongside your integration design is not premature. Resilience is far cheaper to build in than to retrofit.

Preparing your enterprise environment for AI integration

With your AI automation needs defined, the next step is readying your enterprise environment for secure and scalable integration.

Most UK enterprises already have a collection of REST APIs. The good news is that you do not need to rebuild them. The smarter approach is to layer governance and AI-readiness on top of what already exists. That is precisely what the Model Context Protocol (MCP) is designed to do.

MCP governance enforces authentication, authorisation, rate limiting, and threat detection centrally at a gateway layer, preserving enterprise control. In practical terms, this means your AI agent routes its tool calls through a governed MCP server rather than making raw API calls. The gateway intercepts every request, applies policy, logs the interaction, and passes it on. Your existing API management investments stay intact.
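The intercept, apply-policy, log, and forward sequence can be modelled as a single function. This is a minimal sketch, assuming a hypothetical in-memory token table (`TOKENS`) and per-agent tool allow-list (`POLICIES`); a real MCP gateway would validate OAuth tokens against your identity provider, persist audit records durably, and forward over the MCP transport rather than calling a local Python function.

```python
import json
import time
from typing import Any, Callable

# Hypothetical credentials and policies; in production these come from your IdP and policy store.
TOKENS = {"tok-abc": "invoice-agent"}
POLICIES = {"invoice-agent": {"erp.get_invoice", "erp.match_invoice"}}
AUDIT_LOG: list[str] = []


class GatewayError(Exception):
    pass


def gateway_call(token: str, tool: str, args: dict[str, Any],
                 backend: Callable[[str, dict[str, Any]], Any]) -> Any:
    """Authenticate, authorise, log, then forward a tool call to the backend."""
    agent = TOKENS.get(token)
    if agent is None:
        raise GatewayError("authentication failed")
    allowed = tool in POLICIES.get(agent, set())
    # Log every attempt, including denied ones, so the audit trail is complete.
    AUDIT_LOG.append(json.dumps({"ts": time.time(), "agent": agent,
                                 "tool": tool, "allowed": allowed}))
    if not allowed:
        raise GatewayError(f"{agent} is not authorised to call {tool}")
    return backend(tool, args)


def fake_backend(tool: str, args: dict[str, Any]) -> dict[str, Any]:
    """Stand-in for the existing API behind the gateway."""
    return {"tool": tool, "status": "ok", **args}


print(gateway_call("tok-abc", "erp.get_invoice", {"id": 42}, fake_backend))
# {'tool': 'erp.get_invoice', 'status': 'ok', 'id': 42}
```

Keeping the policy check and the audit write in the gateway, rather than in each agent, is what lets the existing API management layer stay untouched.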

Here is a practical preparation sequence:

- Audit your existing APIs. Identify which REST endpoints are stable, well-documented, and suitable for AI agent consumption. Unstable or poorly versioned APIs will cause agent failures at the worst moments.

- Classify data sensitivity. Every API that exposes personal, financial, or regulated data needs explicit access controls before any AI agent touches it.

- Deploy an MCP gateway. Choose a gateway that can sit atop your existing API management layer without replacing it. This is the governance and authentication chokepoint for all AI tool calls.

- Define ownership. Security teams own the gateway policies. Developers own the tool definitions. IT leadership owns the lifecycle governance. Without clear ownership, policy drift is inevitable.

- Document tool schemas. MCP servers expose tools with descriptions that AI agents use to decide what to call. A poorly written description does not surface as a code bug; it surfaces as incorrect agent behaviour.
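MCP tool definitions carry a `name`, a natural-language `description`, and an `inputSchema` expressed as JSON Schema. The example below shows a reasonably described ERP tool (the tool itself is hypothetical), plus a hypothetical `lint_tool` check you could run in CI to catch thin descriptions before an agent ever sees them. The word-count threshold is an arbitrary assumption.

```python
from typing import Any

# A tool definition in the shape MCP uses: name, description, inputSchema (JSON Schema).
get_invoice_tool: dict[str, Any] = {
    "name": "get_invoice",
    "description": (
        "Fetch a single supplier invoice from the ERP by its invoice number. "
        "Read-only. Use this before matching an invoice to a purchase order."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "invoice_number": {
                "type": "string",
                "description": "ERP invoice number, e.g. INV-2024-00123",
            }
        },
        "required": ["invoice_number"],
    },
}


def lint_tool(tool: dict[str, Any], min_words: int = 8) -> list[str]:
    """Hypothetical house-style checks on a tool definition; returns a list of problems."""
    problems = []
    if len(tool.get("description", "").split()) < min_words:
        problems.append("tool description too short")
    schema = tool.get("inputSchema", {})
    properties = schema.get("properties", {})
    for prop, spec in properties.items():
        if not spec.get("description"):
            problems.append(f"parameter '{prop}' has no description")
    for req in schema.get("required", []):
        if req not in properties:
            problems.append(f"required parameter '{req}' is not defined")
    return problems


print(lint_tool(get_invoice_tool))  # []
```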

Key governance controls to configure at the gateway level:

- OAuth 2.0 or API key enforcement for every inbound AI agent call

- Rate limiting per agent identity, not just per IP

- Threat detection for prompt injection attempts targeting your APIs

- Audit logging with sufficient granularity to satisfy compliance requirements
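Rate limiting per agent identity can be sketched as a token bucket keyed by agent ID instead of source IP. This is an illustrative, single-process version; the class name and parameters are assumptions, and a production gateway would keep bucket state in shared storage so limits hold across gateway instances.

```python
import time
from typing import Callable


class AgentRateLimiter:
    """Token-bucket rate limiter keyed by agent identity rather than IP address."""

    def __init__(self, rate_per_sec: float, burst: int,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate_per_sec
        self.burst = burst
        self.clock = clock
        # agent_id -> (tokens remaining, timestamp of last update)
        self._buckets: dict[str, tuple[float, float]] = {}

    def allow(self, agent_id: str) -> bool:
        now = self.clock()
        tokens, last = self._buckets.get(agent_id, (float(self.burst), now))
        # Refill in proportion to elapsed time, capped at the burst size.
        tokens = min(self.burst, tokens + (now - last) * self.rate)
        if tokens >= 1:
            self._buckets[agent_id] = (tokens - 1, now)
            return True
        self._buckets[agent_id] = (tokens, now)
        return False


# With a controllable clock: a burst of two is allowed, the third call is throttled,
# a different agent has its own bucket, and capacity returns after one second.
t = [0.0]
limiter = AgentRateLimiter(rate_per_sec=1.0, burst=2, clock=lambda: t[0])
print([limiter.allow("invoice-agent") for _ in range(3)])  # [True, True, False]
print(limiter.allow("hr-agent"))  # True
t[0] = 1.0
print(limiter.allow("invoice-agent"))  # True
```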

For secure AI system preparation tips that go beyond the basics, production-tested guidance is worth reviewing before you finalise your architecture.

Pro Tip: Do not conflate your MCP server with your AI model. The MCP server is infrastructure. The model is a consumer of it. Keeping these conceptually separate prevents a common architecture mistake where governance logic ends up baked into the AI application layer, making it impossible to audit or update independently.

Executing AI automation tool integration step by step

With your environment prepared, you can move on to the integration itself and the deployment of your automation tools.

The days of writing bespoke integration code for every connection are largely behind us. The best API automation software today offers drag-and-drop workflow builders, AI-assisted configuration, and native connectors for hundreds of enterprise systems. What distinguishes a well-executed integration from a fragile one is not the tool. It is the discipline applied during configuration.

Oracle Integration enables AI assistant-driven integration creation, scheduling, error resolution, and native AI service incorporation with drag-and-drop ease. That capability matters because it means non-specialist developers can build and maintain production integrations, reducing the bottleneck on your most experienced engineers.

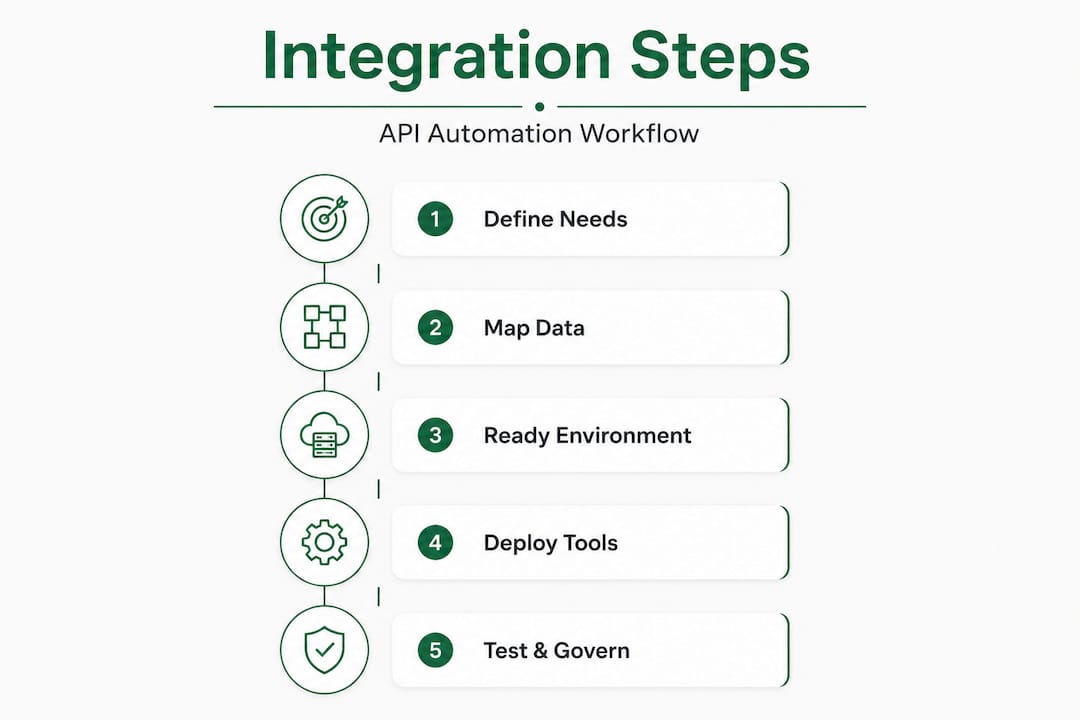

A practical execution sequence for AI automation tool deployment:

- Select your integration platform based on your governance requirements, existing technology stack, and team capability. Low-code platforms reduce time-to-value but require clear guardrails.

- Build your MCP bridge layer connecting existing APIs to the AI agent without rewriting services. A bridge translates existing REST endpoints into MCP-compatible tools.

- Connect your AI model. Whether you are using OpenAI, Azure OpenAI Service, or OCI AI services, configure the connection through your platform's native connector rather than direct API calls from your application code.

- Configure workflows. Define the trigger, the AI reasoning step, and the resulting API actions. Test each step in isolation before chaining them.

- Implement a unified API key manager such as ToolRouter if your agents are calling multiple external AI services. This prevents credential sprawl and gives you a single revocation point.
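A bridge can be thought of as two translations: endpoint metadata into an MCP-style tool definition, and tool-call arguments into an HTTP request against the unchanged REST service. The sketch below assumes a hypothetical `RestEndpoint` description and base URL; it builds a standard-library `urllib.request.Request` but deliberately does not send it, leaving authentication and error handling to your gateway.

```python
import json
import urllib.parse
import urllib.request
from dataclasses import dataclass, field
from typing import Any


@dataclass
class RestEndpoint:
    """Description of an existing REST endpoint (hypothetical shape)."""
    name: str
    description: str
    method: str
    path: str  # e.g. "/invoices/{invoice_number}"
    params: dict[str, str] = field(default_factory=dict)  # param name -> description


def to_tool(ep: RestEndpoint) -> dict[str, Any]:
    """Expose the endpoint as an MCP-style tool definition; the REST service is unchanged."""
    return {
        "name": ep.name,
        "description": ep.description,
        "inputSchema": {
            "type": "object",
            "properties": {p: {"type": "string", "description": d} for p, d in ep.params.items()},
            "required": list(ep.params),
        },
    }


def build_request(ep: RestEndpoint, base_url: str, args: dict[str, str]) -> urllib.request.Request:
    """Translate a tool call's arguments into an HTTP request against the existing API."""
    # Arguments named in the path template are URL-encoded into the path.
    path_args = {k: urllib.parse.quote(v, safe="") for k, v in args.items() if "{" + k + "}" in ep.path}
    url = base_url.rstrip("/") + ep.path.format(**path_args)
    rest = {k: v for k, v in args.items() if k not in path_args}
    if ep.method == "GET":
        if rest:
            url += "?" + urllib.parse.urlencode(rest)
        return urllib.request.Request(url, method="GET")
    # Remaining arguments become a JSON body for write operations.
    return urllib.request.Request(url, data=json.dumps(rest).encode(), method=ep.method,
                                  headers={"Content-Type": "application/json"})


ep = RestEndpoint("get_invoice", "Fetch a supplier invoice by number. Read-only.",
                  "GET", "/invoices/{invoice_number}",
                  {"invoice_number": "ERP invoice number"})
req = build_request(ep, "https://erp.example.internal/api/v2", {"invoice_number": "INV-123"})
print(req.get_method(), req.full_url)
# GET https://erp.example.internal/api/v2/invoices/INV-123
```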

| Integration component | Purpose | Key configuration requirement |
|---|---|---|
| MCP gateway | Governed tool call routing | Policy enforcement, audit logging |
| AI model connector | Natural language reasoning | Credential isolation, token limits |
| REST API bridge | Legacy system access | Versioning, error handling |
| Workflow scheduler | Trigger management | Idempotency, retry logic |
| Unified key manager | Multi-tool credential control | Rotation policy, usage tracking |
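The scheduler's idempotency and retry requirements work together: retries with exponential backoff absorb transient failures, while an idempotency key derived from the trigger (not generated per attempt) stops a re-fired trigger from repeating a side effect such as a payment. This is a minimal sketch with hypothetical names; the in-memory store is neither durable nor concurrency-safe, and in production the downstream API should also honour the key.

```python
import time
from typing import Any, Callable


class TransientError(Exception):
    """Raised by a downstream call for failures worth retrying (timeouts, 503s)."""


class IdempotentStore:
    """Remembers results by idempotency key so a re-fired trigger never repeats a side effect."""

    def __init__(self) -> None:
        self._results: dict[str, Any] = {}

    def run_once(self, key: str, action: Callable[[], Any]) -> Any:
        if key in self._results:
            return self._results[key]
        result = action()
        self._results[key] = result
        return result


def with_retries(action: Callable[[], Any], attempts: int = 3, base_delay: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> Any:
    """Retry transient failures with exponential backoff; re-raise after the last attempt."""
    for attempt in range(attempts):
        try:
            return action()
        except TransientError:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be at least 1")


calls = {"n": 0}


def post_payment() -> str:
    """Hypothetical downstream call that times out once, then succeeds."""
    calls["n"] += 1
    if calls["n"] == 1:
        raise TransientError("timeout")
    return "payment-posted"


store = IdempotentStore()
key = "invoice-INV-123:post_payment"  # derived from the trigger, stable across retries
delays: list[float] = []
first = store.run_once(key, lambda: with_retries(post_payment, sleep=delays.append))
second = store.run_once(key, lambda: with_retries(post_payment, sleep=delays.append))
print(first, second, calls["n"], delays)  # payment-posted payment-posted 2 [0.5]
```

The second trigger returns the stored result without calling the downstream service again, which is exactly the behaviour you want when a scheduler fires twice.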

For enterprise AI automation solutions already configured for UK compliance requirements, a platform built specifically for this context removes a significant amount of the groundwork described above.

Pro Tip: Build your first AI automation workflow around a process with clear, measurable outcomes and low blast radius if something goes wrong. Invoice matching or HR onboarding notifications are far safer starting points than customer-facing decisioning.

Testing, verifying, and governing AI-powered API integrations

With integrations in place, thorough testing and governance are critical for dependable AI automation in your enterprise.

Testing AI-driven workflows is categorically different from testing conventional integrations. A traditional API test checks whether a response matches an expected schema. An AI workflow test must also verify that the reasoning step produced the correct tool call sequence, handled ambiguous inputs gracefully, and did not hallucinate a parameter value into an API call it was never authorised to make.

Effective AI-powered API testing requires end-to-end evaluation, realistic scenarios, regression noise reduction, and human oversight for governance. Each of these is a distinct activity, not a single checkbox.

Key testing practices for AI automation integration:

- End-to-end workflow simulation covering happy paths, edge cases, and outright failure scenarios

- Service virtualisation to mimic downstream dependencies that are unavailable in test environments

- Regression noise management, because AI models can produce slightly different outputs for identical inputs, making traditional assertion-based testing unreliable without tolerances

- Human review gates for high-stakes workflows before they go to production

- Credential isolation testing to verify that an AI agent cannot access scopes beyond those explicitly granted
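Credential isolation and parameter checks can be written as plain assertions against a validator. The sketch below assumes a hypothetical mapping of tools to OAuth-style scopes and to allowed argument names; the call shape mirrors the `name` and `arguments` fields of an MCP `tools/call` request. It catches both an out-of-scope tool and a hallucinated parameter.

```python
from typing import Any

# Hypothetical mapping of tools to the scope each one requires.
TOOL_SCOPES = {
    "get_invoice": "erp:invoices:read",
    "post_payment": "erp:payments:write",
}
# Allowed argument names per tool, taken from each tool's inputSchema.
TOOL_PARAMS = {
    "get_invoice": {"invoice_number"},
    "post_payment": {"invoice_number", "amount"},
}


def check_tool_call(call: dict[str, Any], granted_scopes: set[str]) -> list[str]:
    """Return the violations in a tool call proposed by an agent (empty list means allowed)."""
    tool = call["name"]
    if tool not in TOOL_SCOPES:
        return [f"unknown tool '{tool}'"]
    violations = []
    if TOOL_SCOPES[tool] not in granted_scopes:
        violations.append(f"scope '{TOOL_SCOPES[tool]}' not granted")
    # Arguments outside the schema are a common sign of a hallucinated parameter.
    extra = set(call.get("arguments", {})) - TOOL_PARAMS[tool]
    if extra:
        violations.append(f"unexpected arguments: {sorted(extra)}")
    return violations


# Credential isolation test: a read-only agent must not be able to post payments.
read_only = {"erp:invoices:read"}
assert check_tool_call({"name": "get_invoice", "arguments": {"invoice_number": "INV-1"}}, read_only) == []
assert check_tool_call(
    {"name": "post_payment", "arguments": {"invoice_number": "INV-1", "amount": "10"}}, read_only
) == ["scope 'erp:payments:write' not granted"]
```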

A structured testing sequence:

- Unit test individual tool definitions to confirm the AI agent invokes them with correct parameters

- Integration test the full workflow chain including API responses and AI reasoning transitions

- Simulate dependency outages to verify graceful degradation rather than silent failure

- Review audit logs to confirm every AI action produces a traceable record

- Capture a regression baseline so future model updates can be compared against known-good behaviour
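A regression baseline needs tolerances because identical inputs can produce slightly different model output. One way to sketch this, assuming each run is recorded as a tool-call sequence, a numeric result, and a text summary: compare the tool sequence exactly, the number with `math.isclose`, and the text with a `difflib` similarity ratio. The record shape and both thresholds are placeholders to tune against your own runs.

```python
import difflib
import math
from dataclasses import dataclass


@dataclass
class RunRecord:
    """What one workflow run did (hypothetical shape)."""
    tool_sequence: list[str]
    amount: float
    summary: str


def compare_to_baseline(baseline: RunRecord, current: RunRecord,
                        rel_tol: float = 0.01, min_text_similarity: float = 0.8) -> list[str]:
    """Flag regressions while tolerating small, expected variation in model output."""
    issues = []
    # Tool-call order is the behaviour that matters most, so it must match exactly.
    if current.tool_sequence != baseline.tool_sequence:
        issues.append(f"tool sequence changed: {baseline.tool_sequence} -> {current.tool_sequence}")
    if not math.isclose(current.amount, baseline.amount, rel_tol=rel_tol):
        issues.append(f"amount drifted: {baseline.amount} -> {current.amount}")
    # Free text is allowed to vary in wording, within a similarity threshold.
    ratio = difflib.SequenceMatcher(None, baseline.summary, current.summary).ratio()
    if ratio < min_text_similarity:
        issues.append(f"summary similarity {ratio:.2f} below {min_text_similarity}")
    return issues


baseline = RunRecord(["get_invoice", "get_purchase_order", "match_invoice"], 1250.00,
                     "Invoice INV-123 matched to PO-77.")
reworded = RunRecord(["get_invoice", "get_purchase_order", "match_invoice"], 1250.00,
                     "Invoice INV-123 was matched to PO-77.")
skipped_step = RunRecord(["get_invoice", "match_invoice"], 1250.00,
                         "Invoice INV-123 matched to PO-77.")
print(compare_to_baseline(baseline, reworded))      # []
print(compare_to_baseline(baseline, skipped_step))  # one "tool sequence changed" issue
```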

| Test type | What it validates | Risk if skipped |
|---|---|---|
| Tool call accuracy | Correct parameter mapping | Data corruption, incorrect actions |
| Dependency simulation | Resilience to outages | Silent failures in production |
| Regression baseline | Consistency across model updates | Unpredictable behaviour drift |
| Audit log completeness | Compliance traceability | Regulatory exposure |
| Credential scope testing | Access boundary enforcement | Privilege escalation risk |

For AI integration governance frameworks that address UK-specific compliance requirements, the principles above apply whether you are in financial services, healthcare, or retail.

Pro Tip: Set up synthetic monitoring for your AI agent's tool call patterns in production. A sudden change in call frequency or error rate is often the first signal of a model update or a downstream API change, long before users report problems.
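A simple way to detect a sudden shift in call frequency or error rate is to compare the latest reading with recent history using a z-score. This is a deliberately basic stand-in for a monitoring product's anomaly detection: it ignores daily and weekly seasonality, and the threshold of 3 standard deviations is an assumption. The sample figures are invented for illustration.

```python
import statistics


def is_anomalous(history: list[float], current: float, z_threshold: float = 3.0) -> bool:
    """Flag `current` if it sits more than z_threshold standard deviations from the history mean."""
    if len(history) < 2:
        return False  # not enough data to estimate normal variation
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return current != mean
    return abs(current - mean) / stdev > z_threshold


# Illustrative hourly figures for one agent's tool calls.
hourly_calls = [118, 122, 120, 119, 121, 120, 118, 122]
hourly_error_rate = [0.010, 0.012, 0.011, 0.009, 0.010, 0.011, 0.012, 0.010]
print(is_anomalous(hourly_calls, 121))          # False: normal volume
print(is_anomalous(hourly_calls, 310))          # True: call volume spike
print(is_anomalous(hourly_error_rate, 0.08))    # True: error rate jump
```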

Comparing leading AI automation integration platforms

Understanding how to test and govern your AI integrations leads naturally into evaluating the best platforms to support your strategy.

No single platform is the right answer for every enterprise. The decision comes down to where your governance requirements are most acute, what your existing technology estate looks like, and how much you want to invest in configuration versus convention.

Oracle, MuleSoft, Workato, and ToolRouter offer distinct MCP and AI-supporting features suited for various enterprise needs. Here is how they compare across dimensions that matter to UK enterprises:

| Platform | MCP gateway support | AI assistant capability | Security controls | Best suited for |
|---|---|---|---|---|
| Oracle Integration | Native OCI AI services | AI-assisted workflow creation | OCI IAM, audit logging | Enterprises on Oracle infrastructure |
| MuleSoft Anypoint | Strong MCP bridge enforcement | Moderate, improving rapidly | Robust API governance | Complex hybrid environments |
| Workato | Growing MCP support | Otto AI teammate for workflows | Enterprise-grade SSO | Business-led automation |
| ToolRouter | Unified multi-tool key management | No-token-exposure architecture | API key centralisation | Multi-model AI API management |

Key considerations when selecting an AI automation platform for a UK enterprise:

- Governance depth matters more than feature breadth. A platform with 500 connectors but no gateway-level policy enforcement is a liability.

- Usage-based billing can spiral. Understand how costs scale with API call volume before committing, particularly if your AI agents are expected to run continuously.

- Native compliance tooling for UK data residency and GDPR audit requirements reduces your implementation burden considerably.

- Vendor support for UK enterprises varies. Verify that support SLAs and data processing agreements are appropriate for your sector.
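To see how usage-based billing scales with a continuously running agent, a back-of-the-envelope projection helps. The price, included allowance, and call counts below are placeholders, not any vendor's actual rates; substitute the figures from your own quotes.

```python
def monthly_cost(calls_per_run: int, runs_per_hour: float, price_per_1k_calls: float,
                 hours_per_month: float = 730.0, included_calls: int = 0) -> float:
    """Project monthly spend for an agent billed per API call (all prices are placeholders)."""
    calls = calls_per_run * runs_per_hour * hours_per_month
    billable = max(0.0, calls - included_calls)
    return billable / 1000 * price_per_1k_calls


# Placeholder pricing: 0.50 per 1,000 calls after 50,000 included calls a month.
steady = monthly_cost(calls_per_run=6, runs_per_hour=20, price_per_1k_calls=0.50, included_calls=50_000)
always_on = monthly_cost(calls_per_run=6, runs_per_hour=200, price_per_1k_calls=0.50, included_calls=50_000)
print(f"{steady:.2f} vs {always_on:.2f}")  # 18.80 vs 413.00
```

In this example a tenfold increase in run rate produces roughly a 22-fold increase in spend, because the included allowance covers a much smaller share of the larger volume.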

Rethinking AI automation platforms: lessons from enterprise deployments

There is a pattern in enterprise AI automation projects that almost nobody talks about publicly: the bottleneck moves, it does not disappear. A team spends months integrating an AI-driven automation tool, and six weeks after go-live, the complaint is no longer "this process is too slow." It is "the AI keeps calling the wrong endpoint" or "we cannot tell what the agent did last Tuesday." The efficiency gain is real, but it surfaces a new class of operational problem that the original business case never anticipated.

The lesson is not to avoid AI automation. It is to govern it from day one, not as an afterthought once something breaks. Durable AI automation depends on governed tool layers atop stable REST APIs. MCP bridges add governance without rewriting existing APIs. That is not a technical footnote. It is the architecture philosophy that separates enterprises that scale AI successfully from those that accumulate technical debt at speed.

There is also a widespread overestimation of what "AI-powered" means in platform marketing. Many tools marketed as AI-driven automation are simply workflow engines with an LLM bolted onto the trigger step. That is not inherently bad, but it means you cannot outsource reasoning to the platform. Your workflow design still needs to be sound, your API schemas still need to be clean, and your governance still needs to be explicit.

The enterprises that get this right treat MCP adoption as incremental, not transformational. They add a bridge layer to existing API management, govern it tightly, automate one workflow at a time, and measure outcomes before expanding scope. That is slower than a big-bang deployment. It is also the only approach that produces a system your team can actually maintain and your auditors can actually understand.

Realise efficient AI automation with GMD Automation

Putting all of this into practice requires more than a plan. It requires a platform and a partner who understand UK enterprise requirements from the outset, not after a painful discovery phase.

GMD Automation's AI solutions are built specifically for UK enterprises that need enterprise-grade AI automation without significant upfront capital expenditure. Every deployment includes implementation, operation, maintenance, and ongoing optimisation under a predictable monthly subscription. That means your IT team gets fully prepared, governed AI systems that are compliant, secure, and ready to scale, without the hidden costs that typically accompany enterprise AI projects. If you are ready to explore what AI automation tools integrated with your APIs can do for your organisation, GMD Automation offers a demo agent built on live deployment systems so you can see the capability before committing. Get in touch to arrange a consultation.

Frequently asked questions

What is the Model Context Protocol (MCP) and why is it important for AI automation?

MCP is an open standard for agent-to-tool communication. Routed through a governed gateway, it enables controlled, scalable AI automation in enterprise deployments without rewriting existing APIs. It is important because it gives enterprises a single, auditable control point for everything an AI agent is permitted to do.

How can UK enterprises ensure security when integrating AI automation tools?

Enterprises should implement gateway-based security that enforces authentication, authorisation, rate limiting, and threat detection centrally for all AI agent calls. Credential isolation and full audit logging are non-negotiable additions, particularly in regulated sectors.

What are common challenges when deploying AI automation with API integrations?

The most persistent challenges include governance blind spots and a steady proliferation of point-to-point integrations that accumulates into unmanageable technical debt. Fragmented data silos and the absence of semantic context for AI agents also cause significant reliability problems in production.

How do AI integration platforms improve enterprise automation workflows?

AI integration platforms manage connectivity, governance enforcement, and data translation, enabling AI to focus on reasoning and decisioning rather than infrastructure plumbing. The result is faster workflow execution and a governed, auditable record of every AI action across your enterprise systems.