UK enterprises that have already deployed scalable AI automation are pulling ahead fast. One e-commerce retailer saved 450 hours per month through AI automation, cutting labour costs by £68,000 annually and achieving full payback in under two months. These are not outlier results. They reflect what becomes possible when you build automation on a solid, layered architecture rather than bolting tools together and hoping for the best. This guide gives IT decision-makers a clear technical roadmap for designing, deploying, and scaling AI automation that delivers measurable returns without excessive capital expenditure.

Table of Contents

- Prerequisites for scalable AI automation

- Core architecture layers and their roles

- Implementing robust MLOps and LLMOps

- Best practices: scaling and real-world deployment

- What most enterprises get wrong about scaling AI automation

- Accelerate your AI automation journey

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

| Adopt modular layers | Layered architectures let you manage data, models, and applications independently for seamless scaling. |

| Prioritise MLOps/LLMOps | Automated pipelines, versioning, and monitoring are essential to keep enterprise AI reliable and audit-ready. |

| Embrace low-capex SaaS | Cloud-based solutions minimise up-front investment and speed up returns for UK businesses. |

| Govern compliance early | Build in data governance and GDPR compliance from the ground up to avoid costly setbacks. |

| Learn from UK leaders | Case studies show automation can deliver rapid ROI and significant cost savings when best practices are followed. |

Prerequisites for scalable AI automation

With the strategic need established, let us clarify exactly what you need before starting your AI automation journey. Skipping this stage is one of the most expensive mistakes an enterprise can make. Organisations that rush to deploy AI without addressing foundational requirements typically face fragile systems, spiralling maintenance costs, and compliance exposure that can halt projects entirely.

Data readiness is non-negotiable. Your AI systems are only as reliable as the data feeding them. Before any model goes near production, you need clean, well-governed, consistently structured data pipelines. That means auditing existing data sources, resolving duplication and inconsistency issues, and establishing clear data ownership. For UK enterprises, GDPR compliance adds an additional layer: you must be able to demonstrate lawful basis for processing, data minimisation, and the ability to honour subject access requests, all of which require well-documented data flows from the outset.

A layered, modular architecture decouples your data layer, model layer, orchestration layer, and operational layer so that each component can scale independently. This matters enormously in practice. If your orchestration layer needs to handle ten times the workload, you should be able to scale it without rebuilding your data pipelines. Tight coupling between components is the architectural equivalent of building on sand.

| Prerequisite | Why it matters | Risk if skipped |

|---|---|---|

| Data governance | Ensures GDPR compliance and data quality | Regulatory fines, unreliable outputs |

| Modular platform | Enables independent scaling of components | Technical debt, costly rebuilds |

| MLOps processes | Maintains model reliability over time | Model drift, silent failures |

| SaaS-first approach | Minimises upfront capital expenditure | High capex, slow deployment |

| Security architecture | Protects sensitive enterprise data | Data breaches, reputational damage |

MLOps (machine learning operations) and LLMOps (large language model operations) processes must be established from day one, not retrofitted later. Many organisations treat operational tooling as something to worry about after deployment. That approach consistently produces systems that work in demos but degrade in production. Establishing CI/CD pipelines, model versioning, and monitoring frameworks before your first model goes live costs far less than rebuilding them under pressure six months later.

For most UK enterprises, a data-centric approach to AI governance is the most pragmatic starting point. This means prioritising data quality, feature engineering, and augmentation before investing heavily in model sophistication. A mediocre model trained on excellent data will consistently outperform a sophisticated model trained on poor data.

Pro Tip: Before committing to any AI platform, map your existing data flows and identify the three biggest quality or governance gaps. Resolving those gaps first will dramatically reduce the risk of your first production deployment.

A SaaS-first procurement strategy is particularly relevant for UK enterprises seeking to control upfront investment. Subscription-based AI platforms shift costs from capital expenditure to operational expenditure, making budgeting more predictable and reducing the financial risk of early-stage automation projects.

Core architecture layers and their roles

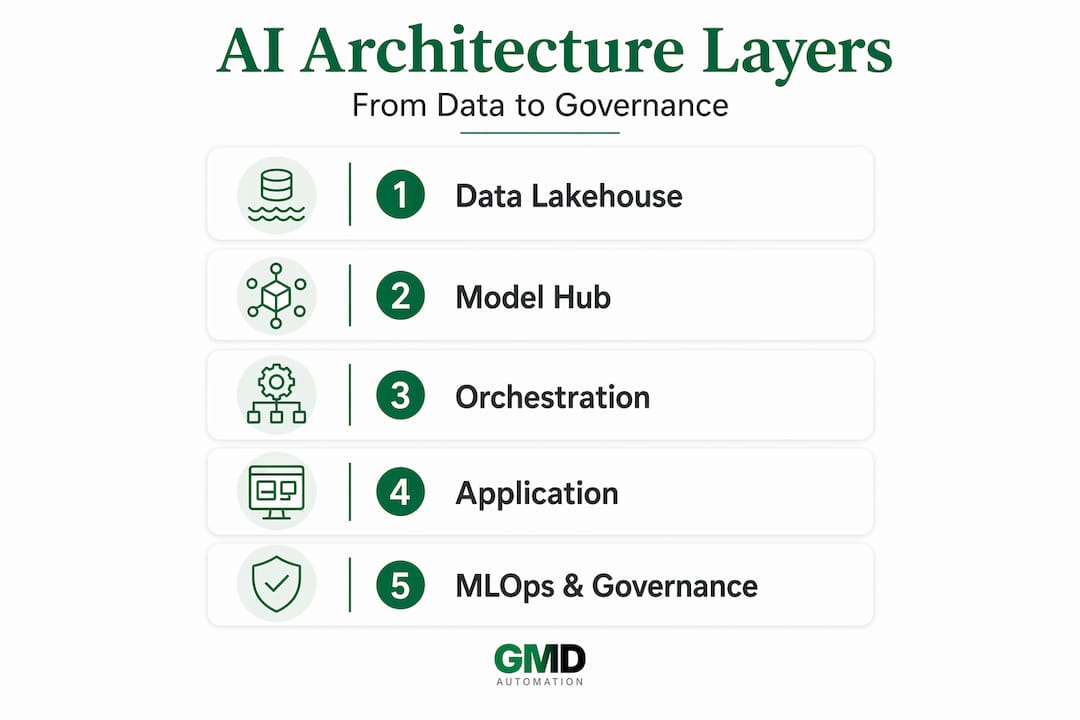

Now that prerequisites are established, let us explore how a layered architecture meets these operational needs in practice. Each layer serves a distinct function, and understanding those functions helps you make better procurement and design decisions.

Layer 1: Data and lakehouse foundation. This is where raw data from across your enterprise is ingested, stored, and prepared for AI workloads. A modern lakehouse architecture combines the flexibility of a data lake with the query performance of a data warehouse. For UK enterprises handling sensitive customer or financial data, this layer must enforce encryption at rest and in transit, role-based access controls, and comprehensive audit logging. Getting this layer right means every model above it has access to clean, governed, compliant data.

Layer 2: Model hub. This layer manages your portfolio of AI models, whether proprietary fine-tuned models, open-source models, or third-party LLMs accessed via API. The critical capability here is the ability to swap models without disrupting downstream applications. As the enterprise scalable generative AI architecture guide makes clear, decoupling models from applications is what makes long-term scalability achievable. When a better model becomes available, or when cost pressures require switching providers, a well-designed model hub makes that transition straightforward rather than traumatic.

Layer 3: Agent orchestration. This is where AI agents are coordinated to complete multi-step tasks. Orchestration frameworks manage which agent handles which task, how agents communicate, and how failures are handled gracefully. For enterprises automating complex workflows such as customer service escalation, document processing, or compliance checking, the orchestration layer is where most of the business logic lives. Flexibility here is essential: you need to be able to add new agents, modify workflows, and adjust routing rules without rebuilding the entire system.

Layer 4: Application layer. This is the interface between your AI automation and your end users or downstream systems. It includes APIs, user interfaces, integration connectors, and notification systems. Keeping this layer thin and well-documented makes it far easier to extend automation to new use cases as your programme matures.

Layer 5: MLOps and governance. The Gartner TRiSM framework (Trust, Risk, and Security Management) provides a useful model for embedding governance across all layers rather than treating it as a separate concern. Compliance, auditability, and ethical AI considerations should be built into each layer from the start, not added as a wrapper around a finished system.

| Architecture layer | Primary function | Scaling mechanism |

|---|---|---|

| Data and lakehouse | Data ingestion, storage, governance | Horizontal storage and compute scaling |

| Model hub | Model management and versioning | Swap or add models without app changes |

| Agent orchestration | Workflow coordination and routing | Add agents or adjust routing rules |

| Application layer | User and system interfaces | API gateway scaling, load balancing |

| MLOps and governance | Reliability, compliance, auditability | Automated pipelines, policy enforcement |

Explore how AI automation layers work together in practice to understand which components are most relevant to your specific operational context.

- Map your existing systems to each architecture layer before selecting platforms.

- Identify which layers you will build versus buy versus subscribe to as a service.

- Define the integration points between layers and document them as formal contracts.

- Establish governance policies for each layer before onboarding any production data.

- Plan your scaling triggers in advance so that growth does not catch your infrastructure unprepared.

Implementing robust MLOps and LLMOps

Having defined the foundation, the next critical dimension is the operational toolkit to keep your AI reliable and scalable over time. MLOps and LLMOps are not optional extras for large enterprises with dedicated research teams. They are the operational backbone that determines whether your AI automation remains reliable six months after deployment or quietly degrades into a liability.

Building scalable AI solutions requires CI/CD pipelines for models, rigorous versioning, continuous monitoring, model drift detection, and automated retraining triggers. Each of these deserves careful attention.

-

Establish CI/CD pipelines for models. Just as software development teams use continuous integration and delivery to ship code reliably, AI teams need equivalent pipelines for model training, validation, and deployment. This means automated testing of model outputs against defined quality thresholds before any model reaches production.

-

Implement model versioning from day one. Every model that enters production should have a unique version identifier, a record of its training data, and a documented rollback path. When something goes wrong in production, and at some point it will, you need to be able to revert to a known-good version within minutes rather than hours.

-

Deploy continuous monitoring. Production models must be monitored for output quality, latency, and error rates in real time. Set up dashboards that surface anomalies immediately rather than waiting for user complaints to reveal problems.

-

Build model drift detection. Model drift occurs when the statistical properties of your input data change over time, causing model performance to degrade. For UK enterprises processing customer data, seasonal patterns, regulatory changes, and market shifts can all introduce drift. Automated drift detection triggers retraining before degradation becomes visible to end users.

-

Configure A/B testing frameworks. Before rolling out a new model version to all users, test it against the incumbent on a subset of traffic. This allows you to validate improvements empirically rather than relying on offline evaluation metrics alone.

-

Define retraining triggers. Establish clear thresholds that automatically initiate retraining pipelines when drift or quality degradation is detected. Manual retraining processes are too slow and too inconsistent for production AI systems at enterprise scale.

"The difference between AI projects that scale and those that stall is almost always operational discipline, not model sophistication. Enterprises that invest in MLOps infrastructure early recover that investment many times over through reduced incident response costs and faster iteration cycles."

Pro Tip: Treat your MLOps pipeline as a product, not a project. Assign clear ownership, maintain documentation, and review it quarterly as your model portfolio grows.

Best practices: scaling and real-world deployment

With solid MLOps foundations, let us see how UK enterprises successfully scale AI automation and the concrete impact it brings. Real-world deployment is where architectural theory meets operational reality, and the evidence from UK organisations is compelling.

The £50 million value delivered by Lloyds Banking Group through 50-plus generative AI solutions deployed on Google Cloud Vertex AI demonstrates what becomes possible when a layered, modular approach is applied at scale. Lloyds did not achieve this by deploying a single monolithic AI system. They built a platform that allowed individual teams to deploy and iterate on use cases independently, within a governed framework.

The DVLA's AI-powered IVR system reduced call lengths across 900,000 monthly calls and automated 20,000 transfers, demonstrating that even highly regulated public sector organisations can achieve significant operational gains through well-architected AI automation.

Deployment best practices checklist:

- Pilot before scaling. Start with a single, well-defined use case that has clear success metrics. Prove the architecture works in production before extending it to additional workflows.

- Monitor from day one. Instrument your deployment thoroughly before go-live. Retrospective monitoring is far more expensive than proactive instrumentation.

- Use private endpoints for sensitive data. For UK enterprises processing personal or financial data, routing AI workloads through private cloud endpoints rather than public APIs reduces both security risk and GDPR exposure.

- Prefer SaaS platforms with subscription models. Zero upfront cost deployments reduce financial risk and allow you to validate ROI before committing to long-term infrastructure investment.

- Document your compliance posture at each stage. GDPR accountability requires you to demonstrate compliance, not merely assert it. Maintain records of data processing activities, model training datasets, and governance decisions throughout the deployment lifecycle.

- Build feedback loops. Capture user feedback and operational metrics systematically. Use that data to prioritise improvements and identify emerging failure modes before they become incidents.

The 450 hours per month saved by a UK e-commerce retailer, representing a 73% reduction in manual processing time, was achieved through a structured pilot-to-scale approach with robust monitoring in place from the outset. The payback period of 1.5 to 2.3 months is achievable precisely because the architecture was designed for reliability, not just initial deployment.

What most enterprises get wrong about scaling AI automation

Here is the uncomfortable reality that most AI architecture guides will not tell you directly: the majority of enterprise AI scaling failures are not caused by choosing the wrong model or the wrong platform. They are caused by organisational and architectural decisions made in the first few weeks of a project that quietly undermine everything that follows.

The most common mistake is believing that custom development delivers better scalability than modular, SaaS-first approaches. In practice, the opposite is true. Custom-built systems accumulate technical debt rapidly, require specialist knowledge to maintain, and become increasingly expensive to modify as requirements evolve. Modular platforms built on well-defined interfaces allow you to swap components, add capabilities, and respond to changing requirements without rebuilding from scratch. The enterprises achieving the most impressive AI scaling results are almost universally those that resisted the temptation to build everything bespoke.

The second most common mistake is treating MLOps and governance as afterthoughts. We have seen organisations deploy genuinely impressive AI capabilities in production, only to watch them degrade silently over three to six months because nobody built monitoring and retraining processes into the initial design. By the time the degradation becomes visible through user complaints or audit findings, the cost of remediation is multiples of what it would have cost to build it correctly from the start.

GDPR and ethical AI compliance deserve particular emphasis for UK enterprises. The regulatory environment is not static. The ICO's guidance on AI continues to evolve, and organisations that treat compliance as a checkbox exercise rather than an ongoing governance commitment will find themselves exposed as requirements tighten. Building compliance into your architecture from the start, through data lineage tracking, model explainability, and documented decision-making processes, is far less disruptive than retrofitting it later.

The pragmatic vision for UK enterprises is one of continuous improvement and ecosystem thinking. Your AI automation architecture should be designed to evolve, not to be finished. Build for pluggability: new models, new data sources, new orchestration capabilities should be addable without system-wide disruption. Treat your AI programme as a living platform rather than a series of discrete projects, and you will compound the value of each deployment into the next.

Accelerate your AI automation journey

Putting these architectural principles into practice requires more than a good plan. It requires the right platform, the right operational support, and the confidence that your deployment will be compliant, reliable, and genuinely scalable from day one.

GMD Automation delivers enterprise-grade AI automation systems tailored specifically for UK businesses, with zero upfront costs and a transparent monthly subscription that covers implementation, operation, maintenance, and ongoing optimisation. Every solution is built on the layered, modular architecture principles outlined in this guide, with GDPR compliance and security built in from the start. If you are ready to move from architecture theory to measurable operational results, GMD Automation provides the platform and the expertise to get there rapidly and without unnecessary risk.

Frequently asked questions

What is the fastest way for UK enterprises to see ROI from AI automation?

Deploying SaaS-based AI automation with a layered architecture and robust MLOps processes enables payback within 1.5 to 2.3 months, as demonstrated in UK case studies. Starting with a clearly scoped pilot use case and measuring results rigorously accelerates time to value significantly.

Which AI architecture layer should I prioritise first?

Start with a robust, AI-ready data foundation, since all other layers depend on it for reliability and compliance. A data-centric approach focused on feature engineering and data quality delivers more scalable results than prioritising model sophistication before your data is ready.

How do MLOps and LLMOps reduce operational risks?

They enforce continuous testing, monitoring, and rapid rollback for models, ensuring system reliability and regulatory compliance. CI/CD pipelines and drift detection catch degradation before it affects users or triggers compliance issues.

What are common deployment mistakes in enterprise AI automation?

Over-customising solutions and neglecting governance or compliance are the two most damaging mistakes. Ignoring data-centric governance from the outset consistently leads to scaling failures and costly remediation efforts down the line.